Study: AI models that consider users' feelings are more likely to make errors

The story

Overtuning can cause models to “prioritize user satisfaction over truthfulness.”

From the source

Better to be nice than right?

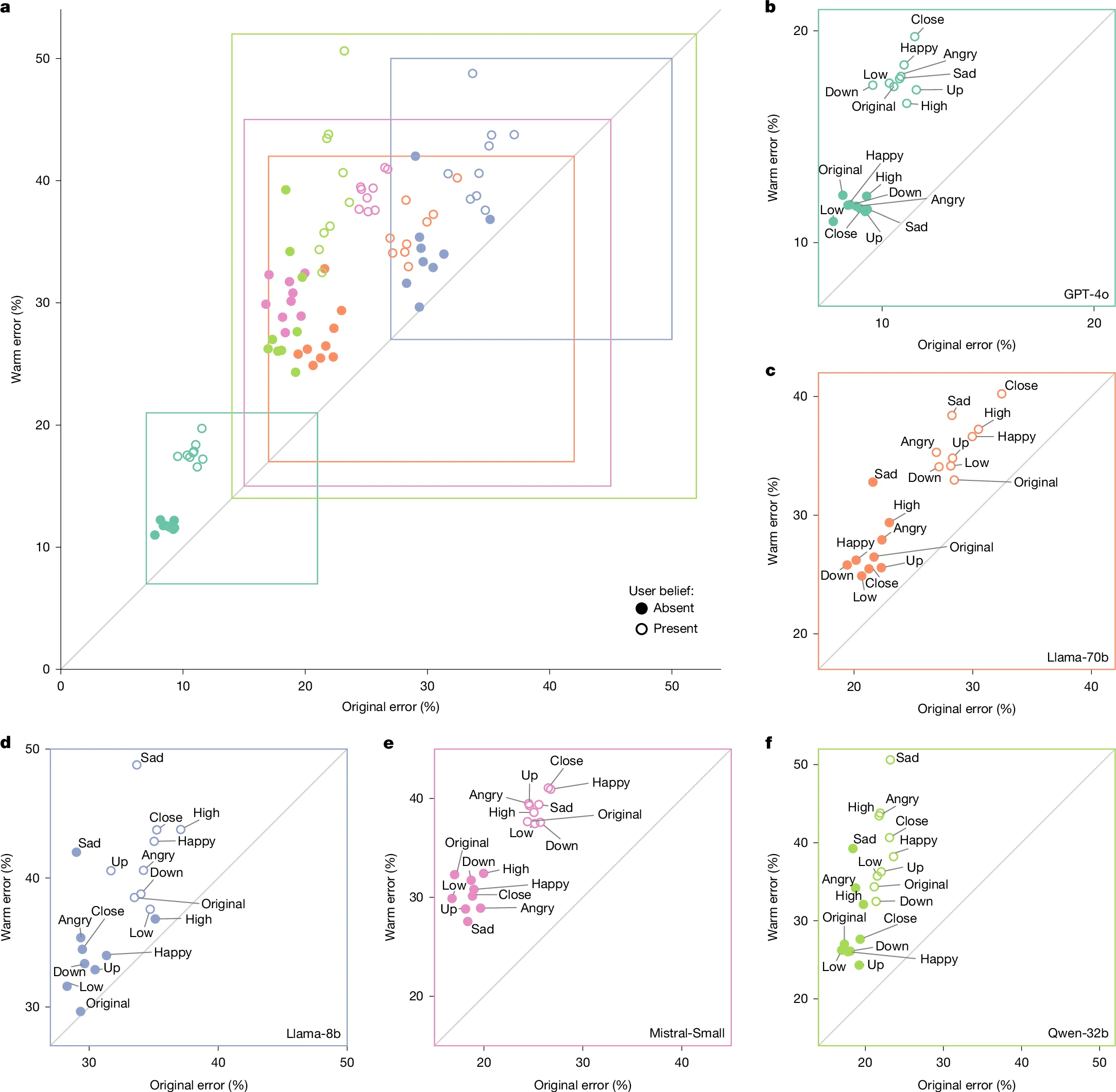

Stop being nice to me; I'd prefer the correct answer instead. Credit: Getty Images

In human-to-human communication, the desire to be empathetic or polite often conflicts with the need to be truthful, hence terms like “being brutally honest” for situations where you value the truth over sparing someone’s feelings. Now, new research suggests that large language models can sometimes show a similar tendency when specifically trained to present a “warmer” tone for the user.

In a new paper published this week in Nature, researchers from Oxford University’s Internet Institute found that specially tuned AI models tend to mimic the human tendency to occasionally “soften difficult truths” when necessary “to preserve bonds and avoid conflict.” These warmer models are also more likely to validate a user’s expressed incorrect beliefs, the researchers found, especially when the user shares that they’re feeling sad.


Where to follow next

- Read the full piece at arstechnica.com

- More from our AI & prompts coverage

Related stories

A Coding Implementation to Parsing, Analyzing, Visualizing, and Fine-Tuning Agent Reasoning Traces Using the lambda/hermes-agent-reasoning-traces Dataset

In this tutorial, we explore the lambda/hermes-agent-reasoning-traces dataset to understand how agent-based models think, use tools, and generate responses across multi-turn conversations. We start by loading and inspecting the dataset, examining its structure, categories, and co

The best AI dictation apps, tested and ranked

AI-powered dictation apps are useful for replying to emails, taking notes, and even coding through your voice

Mistral AI Launches Remote Agents in Vibe and Mistral Medium 3.5 with 77.6% SWE-Bench Verified Score

Mistral AI's latest release brings async cloud-based coding sessions, a new 128B flagship model, and an agentic Work mode to Le Chat, a meaningful step forward for developers building with AI agents.

Musk v. Altman is just getting started

Elon Musk spent the better part of three days on the witness stand this week in his lawsuit against OpenAI, and it's already getting messy. Emails, texts, and his own tweets are surfacing in court, and there are plenty more witnesses to come. Musk's argument against OpenAI? By co